AI Forecasting Evaluation

Approaches for short-term feedback on long-term predictions

(Note: This post is dry, technical, and mostly meant as a reference for future posts. You’ve been warned)

Something that Croesus understood, even 2,500 years ago, was that you need some way to evaluate the quality of your oracles before using their forecasts to make important decisions. Unfortunately for him statistics hadn’t been invented, so he relied on a straightforward approach of receiving a single prophecy from several oracles and going with whichever was the most impressive. Hopefully we’ve learned a bit since then and can do better when evaluating our new AI oracles.

Because I have several experiments to run with an AI bot forecaster, I need some way to quantify how the different versions of the bot are performing. This post is going to get a little in the weeds, but I want to lay out these ideas because they are going to be important for many of the experiments I’d like to run.

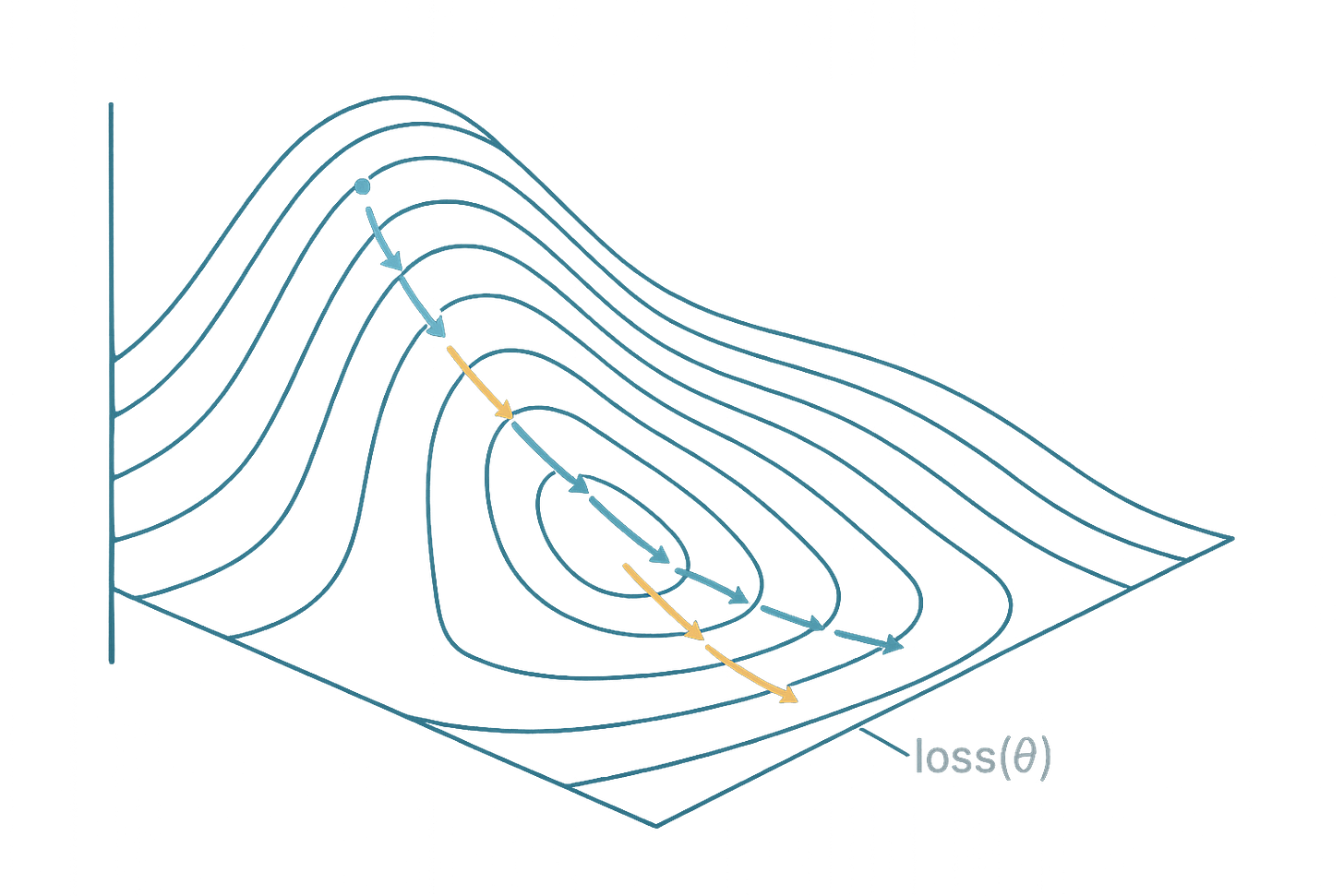

When evaluating human forecasters, the gold standard is to run long-term tournaments like this one and wait (sometimes years) for results to come in. There are some lower investment approaches that could be used, but none are effective at measuring what we really care about: long-term forecasting accuracy. Unfortunately this temporal limitation makes it challenging to iteratively improve forecasting performance, as the gap between any change and eventual feedback is prohibitively large. This is a major issue for developing any kind of automated system to eventually outperform (or even match) human forecasters.

We would like some way to speed up this process. Metaculus is running short, two-week mini tournaments throughout the fall as part of the AI forecasting project. These are very helpful, and I expect to have more to say about some of the recent ones soon. But there are a few issues with using this as our primary form of feedback: the questions are of limited variety, all predictions necessarily resolve within a two-week time span, and because there are few questions (most of them binary) they don’t provide much statistical power. Ideally we would supplement this process to both get some form of immediate feedback for refinement and provide an alternative set of data points.

Leverage Points

The automated nature of forecasting bots opens some potential avenues to exploit that wouldn’t be possible using human forecasters. Below is a short survey of these approaches, including the benefits and drawbacks of each. This list is not exhaustive, but covers some of the most common and high leverage opportunities.

Scaling

Predictions can be made in seconds or minutes, so a large volume of questions can be predicted in a short period of time. This component can be, and usually is, combined with any of the other approaches below to improve statistical power.

Benefits of scaling: Comparing predictions across a very large number of questions gives better statistical power, making us more confident that certain approaches work better. The implementation of scaling is also straightforward, as the same pipeline can be run essentially in a loop.

Drawbacks of scaling: Though individual predictions are relatively inexpensive (on the order of 5-15 cents in my current model), this cost can become an issue when predicting hundreds or thousands of questions. In addition, this approach is limited by the number of relevant questions available. This may also introduce a bias in favor of models that are generally good across a wide array of questions over those that are more narrowly effective within a certain category or type of question.
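To make the cost trade-off concrete, here is a rough sketch of the arithmetic. The 5-15 cent range comes from the figure quoted above; the function names are my own, and the `1/sqrt(n)` scaling of the standard error assumes the questions are independent, which real question sets only approximate.

```python
import math

# Per-prediction cost range quoted in the text (5-15 cents), in dollars.
COST_LOW, COST_HIGH = 0.05, 0.15


def run_cost(n_questions, n_variants=1, cost_per_prediction=(COST_LOW, COST_HIGH)):
    """Return the (low, high) dollar cost of predicting every question with every variant."""
    n_predictions = n_questions * n_variants
    low, high = cost_per_prediction
    return n_predictions * low, n_predictions * high


def relative_standard_error(n_questions):
    """Standard error of a mean score relative to one question's spread.

    Assumes independent questions (an idealization)."""
    return 1 / math.sqrt(n_questions)


for n in (100, 1000):
    low, high = run_cost(n)
    print(f"{n} questions: ${low:.2f} to ${high:.2f}, "
          f"relative standard error {relative_standard_error(n):.3f}")
```

The point of the comparison is that cost grows linearly with the number of questions while the uncertainty only shrinks with its square root, so each extra increment of precision gets more expensive.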

Resources: ForecastBench provides a regularly updated list of 1,000 forecasting questions that have not been resolved. Results are graded nightly, using a combination of true question resolution and intermediate outcomes.

Parallel Testing

Similar to scaling, many iterations of a bot can be created and run in parallel forecasting on the same set of questions. Again this can be combined with other approaches.

Benefits of parallel testing: A large number of variations in strategy can be tested simultaneously, maximizing the amount learned per unit of time. This also allows for more general insight, such as understanding the variance inherent to any given approach or the general lift provided by incorporating a certain model or news source.

Drawbacks of parallel testing: Again, cost will scale in proportion to the number of model variations tested, though there are some approaches that can reduce this cost. For example, if testing variations in a certain prompt that is part of a multi-stage process, the non-varied steps can be cached rather than relying on a fresh API call every iteration. We might also expect survivorship bias from running a large number of models, since the best of many variants will look better than it really is by chance alone. This can be accounted for using techniques like bootstrapping to get a sense of the variance in performance. Finally, parallelizing models to test is slightly more complex than feeding in a large number of discrete questions. It requires infrastructure to support changing and tracking many bot parameters, and a way to robustly analyze the results of those changes.
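As one way to get a sense of that variance, here is a minimal sketch of a paired bootstrap over questions, comparing two bot variants by Brier score. The function names and toy data are mine, and a percentile interval is only one of several reasonable choices. It also does not correct for having tried many variants; it only shows how noisy a single pairwise comparison is.

```python
import random


def brier(prob, outcome):
    """Squared error between a forecast probability and a 0/1 outcome."""
    return (prob - outcome) ** 2


def bootstrap_diff(probs_a, probs_b, outcomes, n_resamples=2000, seed=0):
    """Bootstrap the mean Brier difference (A minus B; negative means A is better).

    Resamples questions with replacement, keeping each question's pair of
    forecasts together. Returns (observed_diff, (lower, upper)) where the
    bounds are a 95% percentile interval.
    """
    rng = random.Random(seed)
    diffs = [brier(a, o) - brier(b, o) for a, b, o in zip(probs_a, probs_b, outcomes)]
    n = len(diffs)
    observed = sum(diffs) / n
    means = sorted(sum(rng.choices(diffs, k=n)) / n for _ in range(n_resamples))
    lower = means[int(0.025 * n_resamples)]
    upper = means[int(0.975 * n_resamples) - 1]
    return observed, (lower, upper)


# Toy data: two hypothetical variants forecasting the same six questions.
outcomes = [1, 0, 1, 1, 0, 0]
variant_a = [0.8, 0.3, 0.7, 0.6, 0.2, 0.4]
variant_b = [0.6, 0.5, 0.5, 0.7, 0.4, 0.5]
observed, (lower, upper) = bootstrap_diff(variant_a, variant_b, outcomes)
print(f"mean Brier difference {observed:.3f}, 95% interval [{lower:.3f}, {upper:.3f}]")
```

If the interval comfortably excludes zero, the difference is less likely to be noise; with only a handful of questions it usually will not.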

Resources: There are a large number of existing tools for automatically varying certain parameters, or changing features such as prompts, which can make this process more efficient. Or these can be designed from scratch without too much headache.
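A from-scratch version can be quite small. The sketch below varies one prompt across several templates while caching a shared upstream stage, so that stage runs once per question rather than once per variant. Both stage functions are hypothetical stand-ins for real API calls, and the counter exists only to make the saving visible.

```python
from functools import lru_cache

# Counts how often each (hypothetical) stage actually runs.
calls = {"research": 0, "forecast": 0}


@lru_cache(maxsize=None)
def research_stage(question):
    """Shared, non-varied stage (e.g. summarizing news); stands in for an expensive API call."""
    calls["research"] += 1
    return f"background summary for: {question}"


def forecast_stage(prompt_template, question, research):
    """Varied stage; a real bot would send this prompt to a model and parse a probability."""
    calls["forecast"] += 1
    return prompt_template.format(question=question, research=research)


def run_variants(questions, prompt_templates):
    """Run every prompt variant on every question, reusing cached research."""
    results = {}
    for name, template in prompt_templates.items():
        for question in questions:
            research = research_stage(question)  # cache hit after the first variant
            results[(name, question)] = forecast_stage(template, question, research)
    return results


questions = ["Will X happen by June?", "Will Y exceed 100?"]
prompt_templates = {
    "terse": "Q: {question}\nContext: {research}\nProbability?",
    "base_rates": "Consider base rates first.\nQ: {question}\nContext: {research}",
    "devil": "Argue both sides, then answer.\nQ: {question}\nContext: {research}",
}
results = run_variants(questions, prompt_templates)
print(calls)
```

Keying `results` by `(variant name, question)` is also a simple way to keep track of which parameters produced which forecast.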

Back Testing

The data available to bots can be limited, for example by training models only on data and news generated before a certain cutoff date. This allows for backtesting, which is where predictions are made about past events that have already resolved (but where the model does not have the answer) to get results immediately rather than waiting for time to pass. This approach is commonly used in many machine learning prediction tasks, like quantitative equity price forecasting. While this method is extremely valuable, the drawbacks are similarly extreme.

Benefits of back testing: This approach provides a much faster feedback cycle as experiments can be run continuously and changes can be made in reaction to those experiments. This enables approaches like tuning or reinforcement that are common in other machine learning applications.

Drawbacks of back testing: The most significant drawback is that this approach is technically demanding and cost-prohibitive. To enable back testing, a model must either be trained from scratch (which also requires access to a clean dataset containing only information before a certain date cutoff), or a pre-trained model must be used with a known information cutoff. The first of these is best, but because the cost of training frontier-quality LLMs is extreme (>$100 million for GPT-4) it is not realistic outside of very valuable business use cases. Alternatively, less expensive and smaller models can be trained, or older models with a known training cutoff can be used, but as a rule these models are less effective than leading models, and in many applications any refinement (e.g., scaffolding, fine tuning, prompt engineering) is swamped by the benefit of using larger scale models. Finally, back testing must exclude web search, as this provides an obvious avenue for data contamination that would render the test meaningless. Because web search is a key component of good forecasting, any approach relying on back testing will be necessarily limited.
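The question-selection side of back testing is the easy part, and can be sketched as a simple date filter. The `Question` class, field names, and dates below are hypothetical. The filter only picks questions the model could not have seen resolved in training; it does nothing about contamination from web search or from a fuzzy training cutoff.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional


@dataclass
class Question:
    title: str
    opened: date               # when the question was first posed
    resolved: Optional[date]   # None if still open
    outcome: Optional[int]     # 1 = yes, 0 = no, None if unresolved


def backtestable(questions, knowledge_cutoff):
    """Keep resolved questions that opened strictly after the model's knowledge cutoff."""
    return [
        q for q in questions
        if q.opened > knowledge_cutoff
        and q.resolved is not None
        and q.outcome is not None
    ]


questions = [
    Question("Opened before cutoff", date(2023, 1, 10), date(2023, 6, 1), 1),
    Question("Opened after cutoff, resolved", date(2024, 2, 1), date(2024, 5, 1), 0),
    Question("Opened after cutoff, still open", date(2024, 3, 1), None, None),
]
usable = backtestable(questions, knowledge_cutoff=date(2023, 12, 31))
print([q.title for q in usable])
```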

Aggregate agreement

While the final outcome of a given question cannot be known until resolution, many questions have aggregated community predictions that combine forecasts from many forecasters. In general these community predictions are quite accurate, much more accurate than the median forecaster though often less accurate than the best performing individuals. One approach may be to use the community forecast as a proxy for the “true” probability of the event at a given point in time. This allows for an immediate feedback loop for comparing models, and can also be tracked across time to see whether the forecast moves in a manner consistent with the bot prediction.

Advantages to aggregates: Instant feedback allows for a much quicker development cycle. In general these aggregates provide a fairly good target, as a bot that perfectly matched this community aggregate would likely perform in the top 5% of individuals. More diagnostic information is also available, since scoring can compare probability distributions rather than fixed outcomes.

Disadvantages to aggregates: Aggregate forecasts are only a proxy for true outcomes, which imposes a natural ceiling on forecasting quality. A bot over-optimized on this metric could definitionally never outperform the aggregate community forecast. Imagine a version of this bot that simply goes online to look at the forecast and then reports that value. Obviously this would not be very useful as a forecaster. There are also limitations imposed by requiring a certain number of human forecasts (necessary for an accurate community aggregate) that may bias the sample towards more popular topics and questions. Lastly, web search tools provide an avenue for data leakage, as bots may gain access to the community aggregate directly.

Resources: The Metaculus-maintained forecasting-tools Python package contains a community comparison benchmarking tool that abstracts away much of the work required to run this (on Metaculus questions). It uses the expected baseline score, which is a scaled version of the expected log score assuming the community prediction is the true probability.
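For intuition, here is a hand-rolled sketch of that idea for a binary question. It is not the forecasting-tools implementation. The scaling (a 50% forecast scores 0, a correct and fully confident forecast scores 100) is my assumption about the general form of a baseline-style log score, and the function name is my own.

```python
import math


def expected_log_score(bot_prob, community_prob, eps=1e-6):
    """Expected scaled log score of a binary forecast, treating the community
    prediction as the true probability.

    Scaling is an assumption: 100 * (log2(p_assigned_to_outcome) + 1),
    so a 50% forecast scores exactly 0.
    """
    p = min(max(bot_prob, eps), 1 - eps)  # avoid log(0)
    c = community_prob
    score_if_yes = 100 * (math.log2(p) + 1)
    score_if_no = 100 * (math.log2(1 - p) + 1)
    return c * score_if_yes + (1 - c) * score_if_no


community = 0.70
for bot in (0.50, 0.60, 0.70, 0.80, 0.95):
    print(f"bot={bot:.2f}  expected score={expected_log_score(bot, community):6.2f}")
```

Because the log score is a proper scoring rule, the expected score peaks when the bot's forecast equals the community prediction. That is the ceiling effect in miniature: under this metric, disagreeing with the crowd can only cost points.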

Interim Endpoints

Predictions can be partially resolved based on interim endpoints. This approach is sometimes used in clinical trials that have very long-term outcomes (such as developing Alzheimer’s disease) that are not practical to measure. An example of this for forecasting might be: if on September 1st a model predicts there will be 15,000 flu cases before the end of the year, but on October 1st we see that there were already 10,000 flu cases in September alone, we can be fairly confident that the model has undershot.
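The flu example can be sketched in two small checks for a cumulative count question. The function names, the sample values, and the linear-pace extrapolation are my own assumptions. A constant pace is a crude model for something as seasonal as flu, so treat the projection as a sanity check rather than a resolution.

```python
def mass_ruled_out(forecast_samples, observed_so_far):
    """Fraction of forecast samples already impossible, because a cumulative
    count can never end below its running total."""
    below = sum(1 for s in forecast_samples if s < observed_so_far)
    return below / len(forecast_samples)


def linear_pace_projection(observed_so_far, days_elapsed, days_total):
    """Naive projection assuming the observed pace continues unchanged."""
    return observed_so_far * days_total / days_elapsed


# Flu example from the text: forecast made September 1st for the rest of the
# year (122 days), checked after the 30 days of September.
point_forecast = 15_000
observed = 10_000
projection = linear_pace_projection(observed, days_elapsed=30, days_total=122)
print(f"projected {projection:,.0f} vs forecast {point_forecast:,}")

# Hypothetical samples from a bot's forecast distribution.
samples = [8_000, 12_000, 15_000, 20_000]
print(f"share of forecast already ruled out: {mass_ruled_out(samples, observed):.0%}")
```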

Advantages to interim endpoints: Unlike the community forecast, interim endpoints reference some ground truth or model result that in principle can exceed human forecast accuracy. This goes some way towards eliminating the ceiling effect, while still allowing a faster cycle of resolution than can be achieved by waiting for the final result.

Disadvantages to interim endpoints: The most significant issue with this approach is that it is necessarily limited in scope. Logical interim endpoints make sense for only a small fraction of questions (e.g., cumulative count questions, stock prices, or other numerical predictions). This limits the applicability for a generalist prediction bot, though there may be some value in more specialized bots specifically addressing these questions. A second issue is that this approach is effort-intensive. A proxy endpoint must generally be defined individually for each question, and it is not obvious how this can be automated.

The Issue is Always Uncertainty

While there are a number of different levers to pull to take advantage of automated forecasting bots’ strengths, none of these are a slam dunk and they all come with pretty significant drawbacks. To make matters worse, it can be difficult to tell the difference between forecasters of different skill levels even in an ideal world. Here is a helpful (though somewhat demoralizing) blog post on this question from Metaculus. To steal the punchline, an ideal forecaster (who always reports exactly the true probability) beats a middle-of-the-road forecaster (whose forecasts are on average off by 12.5%) about 92% of the time in a 100-question tournament. These are pretty good but not great odds, and if we want to reach 80% power on this experiment we end up needing to ask about 200 questions. Parallel testing and scaling make this doable, but it does impose a cost constraint that prevents us from running an unlimited number of experiments.
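A toy Monte Carlo version of this kind of comparison is easy to write. The sketch below is not the Metaculus simulation and should not be expected to reproduce its figures. It assumes true probabilities are uniform on [0, 1], that the weaker forecaster is off by exactly 12.5 percentage points in a random direction, and that tournaments are scored by total Brier score. All three are my assumptions.

```python
import random


def simulate_win_rate(n_questions, noise=0.125, n_tournaments=1000, seed=0):
    """Share of simulated tournaments in which the ideal forecaster gets a
    strictly lower total Brier score than the noisy forecaster."""
    rng = random.Random(seed)
    wins = 0
    for _ in range(n_tournaments):
        ideal_total = 0.0
        noisy_total = 0.0
        for _ in range(n_questions):
            p = rng.random()                         # true probability (assumed uniform)
            outcome = 1 if rng.random() < p else 0   # question resolves
            offset = noise if rng.random() < 0.5 else -noise
            q = min(max(p + offset, 0.01), 0.99)     # noisy forecast, clipped
            ideal_total += (p - outcome) ** 2
            noisy_total += (q - outcome) ** 2
        if ideal_total < noisy_total:
            wins += 1
    return wins / n_tournaments


for n in (25, 100, 200):
    print(f"{n} questions: ideal forecaster wins {simulate_win_rate(n):.1%} of tournaments")
```

Even in this idealized setup the better forecaster loses a meaningful share of small tournaments, which is the practical reason to lean on scaling.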

One thing to note about this simulation study is that the performance of all models is relatively good. The Brier score for the weaker tested model was 0.196, compared to 0.167 for the ideal model. To put this in perspective, comparable Brier scores from the Good Judgment Project experiment were ~0.14 for the best superforecasters and ~0.20 for the rest of the crowd (note: these were reported on a 2-point Brier scale so I’ve divided by 2 for comparability). So the difference in performance (relative error) in that human study was about twice as large as the difference in this simulation study. I think this should give us some confidence when it comes to evaluating strategies.
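The "about twice as large" comparison is just the ratio of the two score gaps. Using only the figures quoted above:

```python
# Good Judgment Project figures as quoted above (already halved to the 0-1 scale).
gjp_superforecasters, gjp_crowd = 0.14, 0.20
# Simulation study figures as quoted above.
sim_ideal, sim_weaker = 0.167, 0.196

human_gap = gjp_crowd - gjp_superforecasters
sim_gap = sim_weaker - sim_ideal
ratio = human_gap / sim_gap

print(f"human gap {human_gap:.3f}, simulation gap {sim_gap:.3f}, ratio {ratio:.2f}")
```

Since the human figures are themselves approximate, the ratio should be read as "roughly two" rather than as a precise value.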

It’s clear that between the inherent uncertainty, low sample sizes, and long time horizons of forecasting, there will not be a clean optimization cycle that automatically leads to superhuman performance. Despite this, I think the options laid out here give plenty of opportunity for significant improvement.

Experimentation on a Budget

Like most people participating in forecasting tournaments, I treat this as more of a hobby than a profession. This imposes certain limitations that might be different from an academic study or business environment. In my setting, time and budget constraints effectively rule out back testing. They also limit the degree of scaling and parallel testing, which can rapidly become cost-prohibitive. Finally, interim endpoints are generally too labor-intensive to define and implement, which limits their broad applicability.

That leaves aggregate agreement as the primary strategy, with targeted scaling and parallel testing to gain power efficiently. This will be my general approach moving forward, though it will be adjusted depending on the specific needs of each individual experiment.